Benchmarking AI Models at Scale

Concurrent: Free LLM benchmarking tool

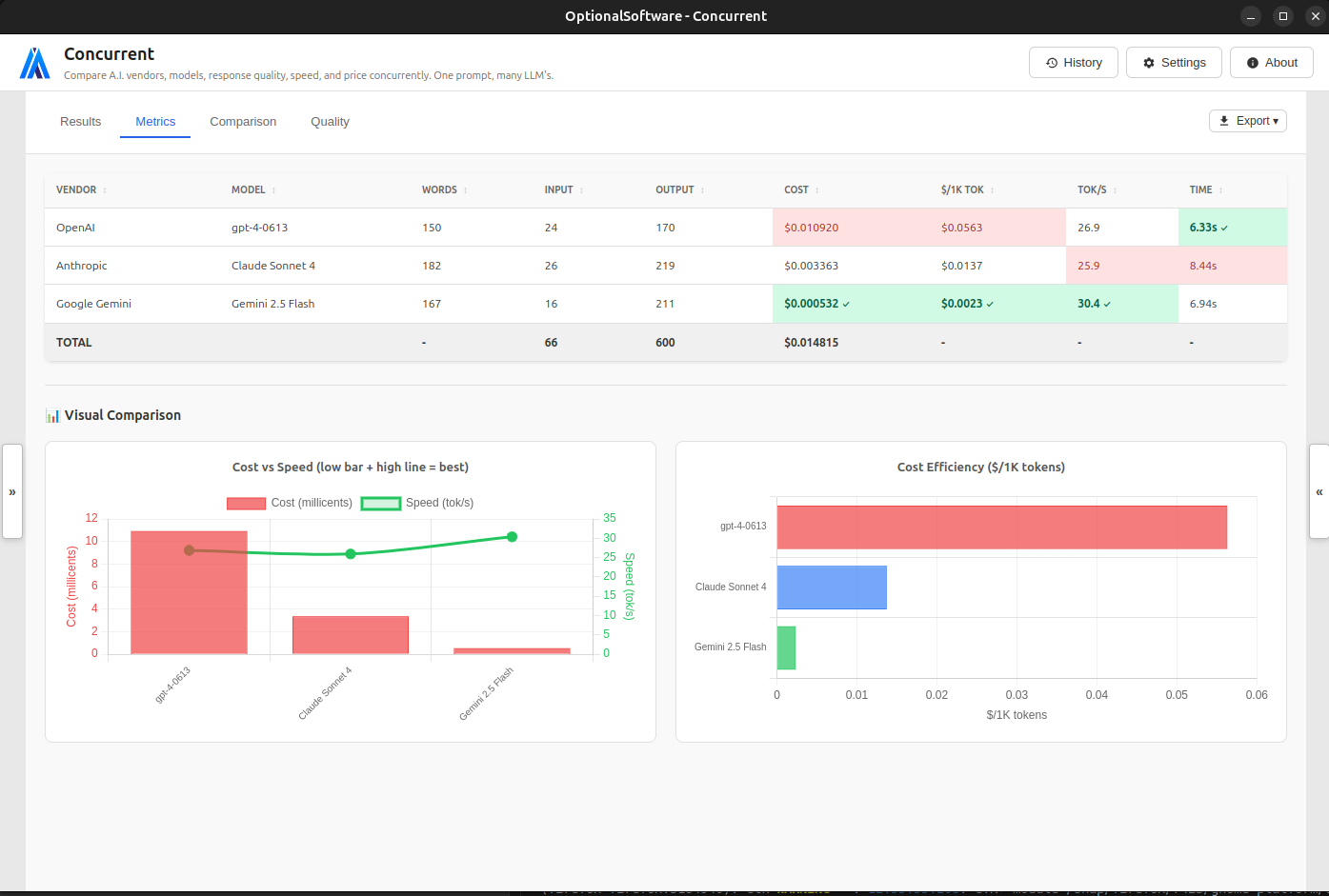

I just stumbled upon a free app called Concurrent that lets you benchmark different LLMs on speed, cost, and quality.

The app was built by Al Castle, VP of Engineering & Security at Nerdy. It sends your prompt to multiple AI models simultaneously and shows you side-by-side comparisons of quality, cost, and speed.
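To make the "send one prompt to several models at once" idea concrete, here is a minimal Python sketch of that fan-out pattern. This is not how Concurrent itself is implemented (I have not seen its code). The model names, the prices, and the `call_model` stub are all hypothetical placeholders, and quality scoring is left out entirely.

```python
import asyncio
import time
from dataclasses import dataclass


@dataclass
class Result:
    model: str
    text: str
    latency_s: float
    cost_usd: float


# Hypothetical prices per 1K tokens, made up for illustration only.
PRICE_PER_1K_TOKENS = {"model-a": 0.50, "model-b": 0.10}


async def call_model(model: str, prompt: str) -> str:
    """Stand-in for a real API call; swap in your provider's client here."""
    await asyncio.sleep(0.01)  # simulate network latency
    return f"[{model}] reply to: {prompt}"


async def run_one(model: str, prompt: str) -> Result:
    """Time a single model call and estimate its cost."""
    start = time.perf_counter()
    text = await call_model(model, prompt)
    latency = time.perf_counter() - start
    # Crude token estimate: whitespace-separated words in prompt plus reply.
    tokens = len(prompt.split()) + len(text.split())
    cost = tokens / 1000 * PRICE_PER_1K_TOKENS[model]
    return Result(model, text, latency, cost)


async def benchmark(prompt: str, models: list[str]) -> list[Result]:
    """Send the same prompt to every model concurrently."""
    # gather() runs all calls concurrently and keeps results in input order.
    return await asyncio.gather(*(run_one(m, prompt) for m in models))


if __name__ == "__main__":
    results = asyncio.run(benchmark("Explain recursion", ["model-a", "model-b"]))
    for r in results:
        print(f"{r.model:10s} {r.latency_s:8.3f}s  ${r.cost_usd:.4f}  {r.text}")
```

Because the calls run concurrently, the total wait is roughly that of the slowest model rather than the sum of all of them, which is what makes a side-by-side comparison practical.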

I especially like the feature that measures and compares the quality of AI responses.